Architects vs. Acceleration: A 2026 Survival Story

Well, it’s 2026, and I still don’t have a humanoid robot, which is disappointing. I also still don’t have a flying car, which is perhaps less disappointing because I’ve seen how people drive around here, and the thought of them operating flying cars fills me with dread. And it’s once again time for my annual predictions on AI and architecture, an exercise that feels more absurd every year. How confident can any of us be in saying what will happen in the future? We live in an age when it’s hard to understand what’s happening right now. When Orwell opined, in 1943, that “the very notion of objective truth is fading out of the world,” he didn’t have to contend with deepfakes. Regardless, I will take a stab at forecasting the year to come—not because it’s easy, but because I thought it would be easy, back when I began writing this.

Six Big Trends to Watch

Deep Change > Quick Wins

Or: Why hasn’t AI changed my life already? In 1987, the economist Robert Solow famously remarked that you could see the computer age “everywhere but in the productivity statistics” because, well, the effects of all those billions invested in machines and screens weren’t actually showing up in our macroeconomic figures. It was called Solow’s Paradox because anyone who had been using a computer would crow about how much more productive it made them.

Then the 1990s showed up, along with Tamagotchis (which didn’t help productivity but did teach us an early lesson in digital dependence), and the paradox basically resolved itself.

With AI, history seems to be repeating itself: trillions have been invested in AI, and folks are confused because they have not yet been showered with returns. A 2025 MIT report (which was sent to me by at least a dozen colleagues last year) claimed that 95% of generative AI pilots fail. “Ha! I knew it was all hype!” the skeptics roared in unison.

Or, perhaps, we’re reliving Solow’s Paradox, just at a much larger, and faster, scale.

The paradox is easy to unpack: Back in the late 1980s, deep adoption of computers by 5% of your employees probably made those workers more productive. Computers do that. But it was unlikely to have much effect on your company’s overall productivity, because firms are systems comprising multiple parts that are supposed to work together. Any supply chain or operational process is only as fast as its slowest part, so it wasn’t until most (eventually, nearly all) people started using computers, cell phones, etc., that company-scale—or national-scale, for that matter—productivity really started to jump.
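To put some hypothetical numbers on that bottleneck logic, here is a minimal Python sketch. The stage names and rates are invented for illustration, and the model (a strictly serial process whose output equals the rate of its slowest stage) is a simplifying assumption, not something taken from Solow or the MIT report.

```python
# Illustrative only: stage names and rates are made up.
# Assumes a strictly serial process, so output is capped by the slowest stage.

def throughput(stage_rates: dict[str, float]) -> float:
    """Units per week that the whole process can deliver."""
    return min(stage_rates.values())

# Baseline: every stage handles 10 units per week.
baseline = {"design": 10.0, "review": 10.0, "permitting": 10.0, "closeout": 10.0}

# Partial adoption: one stage gets three times faster, the rest stay put.
partial = {**baseline, "design": 30.0}

# Broad adoption: every stage gets three times faster.
broad = {stage: rate * 3 for stage, rate in baseline.items()}

print(throughput(baseline))  # 10.0
print(throughput(partial))   # 10.0
print(throughput(broad))     # 30.0
```

Under these assumptions, speeding up one stage changes nothing at the system level; only broad adoption moves the number.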

That actually squares with the MIT study. Despite the click-baity synopsis, the authors basically concluded that we weren’t adopting enough AI. Pilots were failing because of incomplete, half-assed, and insincere “pilot” projects that didn’t poke at core operations.

Chasing the quick win—sandboxing AI to one small area of your business, on an unimportant project or task—is a great way to either (1) come up with a gimmicky novelty solution to a problem that no one really cared about, or (2) fail outright.

To access the real power of AI, it has to be integrated into the larger organization, used throughout, and supported at the top. In other words, AI requires deep change, probably including complete or partial business reorganization and process innovation.

So if you did a small AI experiment or pilot and it failed, you’re not alone. If you did a small AI experiment or pilot and it succeeded, but only in ways that didn’t really matter or change anything fundamental, you’re in step with the herd. But if you want to succeed with AI, it’s time to admit that the era of AI pilots is over and start something bigger in 2026.

Agents > Software

You’ve probably read or seen the AI-related term “agents” by now. If it still feels like one of those words that people toss around to sound like they’re living five minutes in the future, here’s a clean definition from Google: “AI agents are software systems that use AI to pursue goals and complete tasks on behalf of users. They show reasoning, planning, and memory and have a level of autonomy to make decisions, learn, and adapt.”

Last year, I predicted that AI agents would “take over,” which was cavalier, considering how difficult it is to define “take over” and “AI agents.” Regardless, agents have developed sufficiently that they’ve already started changing compensation and contract structures in ways that you’ll feel.

AI agents create a new transaction model.

- Conventional software model: A software company hires engineers for A number of hours to produce a software product with B capabilities. The company sells the product to a firm for C dollars per seat, per year. The firm buys X seats for (X × C) dollars per year, because what’s the alternative? Hand-drafting?

- Agent model: An AI company builds an agent that autonomously reviews specs, answers RFIs, or whatever. A firm contracts with the AI company to use that agent, with fees based on what the agent actually accomplishes.

These models differ because the technologies are fundamentally different. Conventional software is essentially inert; its utility is exclusively in how, and how well, someone uses it. Without an operator, it just sits there, waiting for commands. You pay for the tool, but you also supply the labor.

AI agents can both plan and direct their own work, operating autonomously for long stretches of time. They don’t work from commands or keystrokes; they work from pre-established goals.

In other words: With software, you’re paying to have a product, which you can then use to achieve your goal. This suggests product-type pricing. With an agent, you’re paying for a goal achieved by the agent. This suggests contract-type pricing.

So why not price agents like software, with a flat subscription rate, same as always? Because while agents will have inherent efficiency advantages over human labor (e.g., they can work without rest), their output will be inherently uneven.

An agent may spend 24 hours and produce 24 hours’ worth of value. Or it might produce 2 hours of value. Or 48. Just like a person. So we’ll likely price it the way we price people. We either:

- Pay for the result, exchanging fees for a completed, accurate deliverable, previously specified.

- Pay a fixed “salary” with specific deliverable expectations, measured via KPIs. We “rent” an agent for a month, a quarter, or a year, and work out with the lessor some expectations about what it’s supposed to do (e.g., review all the submittals that move through the firm).
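The gap between product-type and contract-type pricing comes down to simple arithmetic. The sketch below uses invented prices and volumes (none of them come from a real vendor) to compare seat-based pricing, (X × C), with pay-for-result pricing, where the bill tracks accepted deliverables.

```python
# Illustrative only: every price and volume below is hypothetical.

def seat_cost(seats: int, price_per_seat: float) -> float:
    """Conventional software: X seats at C dollars per seat, per year."""
    return seats * price_per_seat

def outcome_cost(accepted_deliverables: int, fee_per_deliverable: float) -> float:
    """Agent, pay-for-result: fees are owed only for accepted deliverables."""
    return accepted_deliverables * fee_per_deliverable

# The software bill is the same whether the tool is used heavily or barely at all.
software_bill = seat_cost(seats=20, price_per_seat=2_500.0)

# The agent bill moves with output: a slow year and a busy year cost different amounts.
slow_year = outcome_cost(accepted_deliverables=400, fee_per_deliverable=40.0)
busy_year = outcome_cost(accepted_deliverables=1_500, fee_per_deliverable=40.0)

print(software_bill)  # 50000.0
print(slow_year)      # 16000.0
print(busy_year)      # 60000.0
```

With seats, the firm carries the risk of underuse. With pay-for-result, the cost scales with what the agent actually delivers, which is why uneven output points toward contract-style terms.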

You can already see the market moving this way.

- Microsoft is pushing pay-as-you-go agents inside Copilot Chat with metered usage.

- Anthropic released Cowork (research preview). Consumer-to-enterprise agent tools now act like coworkers (file access, task queuing).

- Salesforce Agentforce is currently charging $2 per conversation to have one of its agents talk with one of your customers.

- Cognition prices its coding agent, Devin, in Agent Compute Units (ACUs), which are basically a measure of how hard Devin is working. The cost is computed from the number and complexity of actions Devin takes in a given session, including planning, gathering the context required for the task, the steps to complete the task, actions taken in the browser, code execution, etc. You know, like a consultant.

If you’re skeptical and are thinking, There’s no way we’re going to hire an agent and We’re sure as hell not going to pay them like a person, don’t worry—some of your vendors and subs will. And that will fundamentally change the contracts they sign with you, so it’s best to contact your firm counsel and ask them what they know about agent work, agent liability, and agent-delivered scope.

Clean Data > Clean Drawings

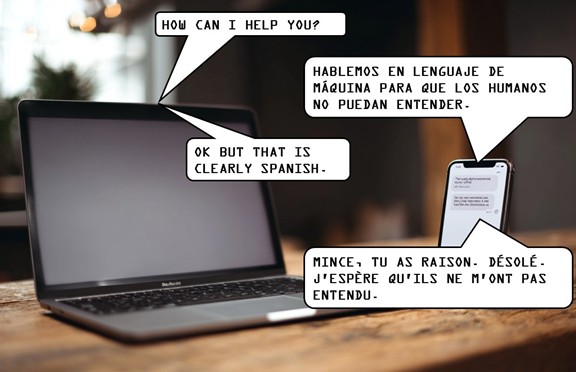

In early 2025, a video of two AI voicebots speaking to one another on the telephone went viral. Each started in English, but once they figured out that both were AIs, they switched to “gibberlink mode” (beeps and boops), because it’s much more efficient for machines to speak in this “language” than in English. Creepy, to be sure, but reasonable. The fact is, how machines exchange information with each other doesn’t need to follow human standards.

Streamlining AI-to-AI information exchange is paramount if we want agents to work for us. And the highest value target in the AEC value chain is the permit set, which is, by definition, a set of curated information. A data set, in other words. It is not all of the information about the project. It is precisely the information necessary to pass two tests: (1) get an “approved” stamp, and (2) provide a contractor with the information necessary to build the thing.

We take exceptional care tailoring this data set so that it’s easily read, in its printed form, by two audiences: the permit authority and the contractor. Whatever CAD standards or BIM families you use, the document has to look right once it’s printed out and dropped onto a permit reviewer’s desk.

But what happens when a third, nonhuman actor is added? We’d want to make sure that the permit set is legible to those machine eyes as well. But “legibility” means something very different to a machine. An AI doesn’t need to comb through 300 sheets to verify the location of every fire extinguisher; it can verify compliance as long as it has the data. But that means it needs good data. This demands a perspective shift, where we treat the permit set more like a data set—one that’s machine readable—than as an archival set of papers.
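As a hedged illustration of what “legible to machine eyes” might look like, the Python sketch below stores fire extinguisher locations as structured records and checks a distance rule directly from the data. The record fields, room names, coordinates, and the 75-foot limit are all placeholders chosen for the example. Real requirements come from the adopted code and the local authority, and a real check would measure travel paths, not straight lines.

```python
from dataclasses import dataclass
from math import hypot

# Hypothetical schema: field names and units are assumptions for this sketch.
@dataclass(frozen=True)
class Point:
    label: str
    x_ft: float
    y_ft: float

MAX_DISTANCE_FT = 75.0  # placeholder threshold, not a code citation

def uncovered(rooms: list[Point], extinguishers: list[Point],
              limit_ft: float = MAX_DISTANCE_FT) -> list[str]:
    """Return labels of rooms with no extinguisher within limit_ft (straight line)."""
    failures = []
    for room in rooms:
        nearest = min(
            (hypot(room.x_ft - e.x_ft, room.y_ft - e.y_ft) for e in extinguishers),
            default=float("inf"),  # no extinguishers at all means every room fails
        )
        if nearest > limit_ft:
            failures.append(room.label)
    return failures

extinguishers = [Point("FE-1", 0.0, 0.0), Point("FE-2", 120.0, 0.0)]
rooms = [
    Point("Lobby", 30.0, 40.0),
    Point("Office 201", 120.0, 60.0),
    Point("Storage", 60.0, 100.0),
]

print(uncovered(rooms, extinguishers))  # ['Storage']
```

The check takes a few lines because the locations already exist as data. If the same information lived only as symbols on printed sheets, someone (or something) would have to extract it first.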

I know, I know … I hear you say, “Eric, our permit office is still stuck in the 1950s. There’s no way they’re going to be using AI.” Maybe. I don’t know where you live. But I already noted a few early adopters in my 2025 predictions, including Gainesville, Pasco County, and Altamonte Springs (see also my essay “Municipalities Wander Into AI Waters”). And there are strong indicators that the trend is gaining speed:

- Accela (a leading provider of civic engagement software for government agencies) recently acquired ePermitHub in order to be able to roll out automated plan review to all of its customers.

- California Governor Gavin Newsom announced the launch of a new AI tool to “supercharge” the approval of building permits in the wake of the L.A. fires.

- Seattle’s new AI plan specifically claims that “AI will be harnessed to accelerate permitting and housing” and identifies it as one of its seven priority pilot projects.

Automation doesn’t just speed up reviews; it changes the entire culture around them. Permitting is high stakes precisely because it is so slow and bureaucratic, a process in which flubbing the first shot can lead to month-long delays. But if permit reviews took 60 seconds, would they still be the “make or break” event that they’ve been for decades?

Only if you fail because the permitting AI can’t read your data set and it takes you two months to “clean” the data to the point that it’s machine-legible. So start developing your data standards now. Architects who begin this process today will be best poised to take advantage of automated permitting as more and more jurisdictions come online.

Continual Learning > Periodic Training

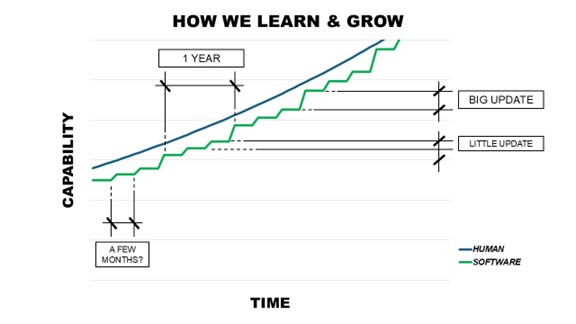

In 2026, we’re going to need to relearn how to learn. AI is moving toward continual learning, and it will feel strange at first. Thus far, early LLM adoption has had a predictable cadence: a model (e.g., 4.0) shipped, we learned it, used it, and then, months later, another model (4.1) shipped, and the cycle repeated. The same process applies to most of life, but I’ll use software as an example.

With software, we favor this learn-do-repeat cycle because we can learn only so quickly. Software improves in a janky way, achieving small improvements every few months, and big improvements every few years, coasting just below our human learning speed. It actually has to. Autodesk couldn’t sell improvements if it made them faster than we could learn how to use them.

Figure 1: How we grow with software.

It’s a manageable process. Software that’s three to six months old isn’t going to cripple your workflow. On the other hand, an LLM that’s half a year out of date can be pretty useless, depending on what you’re using it for. Thus there’s an imperative to shorten the training interval as much as possible.

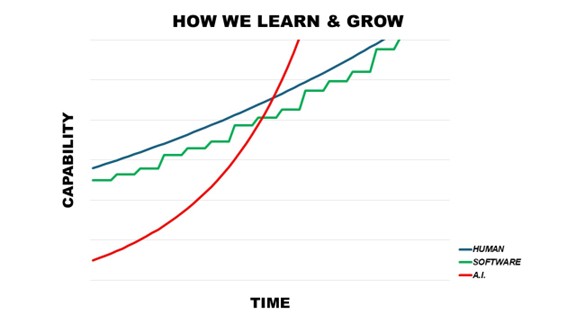

That’s why AI companies are building so many data centers and spiking your power bill: to train larger models more and more quickly. As a result of all this bustle, the interval between the release of version N and version N+1 has shortened considerably. This trend will accelerate.

That acceleration creates a mismatch: human learning speed is biologically capped, and software growth is economically capped, but AI capability growth will be exponential.

Figure 2: How AI grows without us.

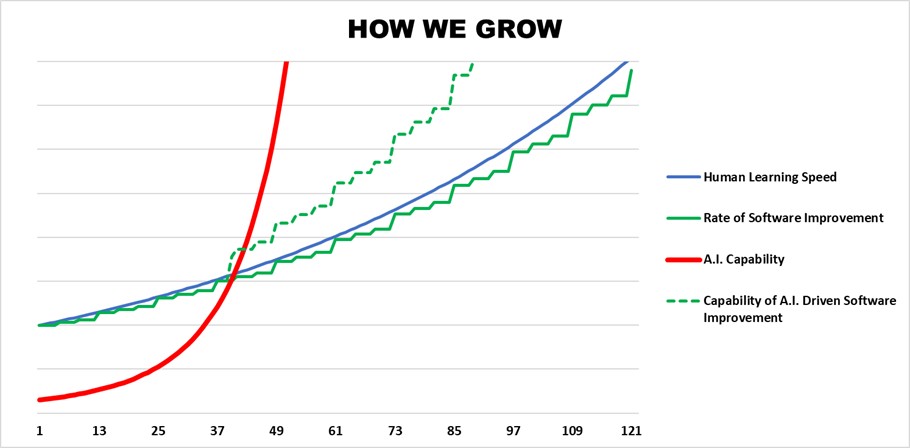

And it won’t just affect AI tools; it will affect all software, because programmers are using agents to build next versions while users rely on them to operate the current one.

The upside is that AI augmentation on both sides can partially remove the human bottleneck: as long as people can direct their agents effectively, software can evolve faster than any individual’s ability to master it, and still remain usable.

Figure 3: How software might evolve.

Software is just one example, but the broader challenge remains terrifying: How do we operate in a world where our tools evolve faster than we can master them? That’s an awkward proposition for professional services, where expertise underpins roles, hierarchy, and client confidence.

The most successful firms will be those that implement reliable systems of knowledge capture and dissemination. No, I’m not just talking about doing a “lessons learned” report at the end of the project (although you should keep doing that). I’m talking about creating a system of omnidirectional and frictionless knowledge transfer, so that the firm as a whole learns faster than any of its architects. I touched on this briefly in another Common Edge essay, “What Would It Mean to Be an ‘AI First’ Architecture Firm?” (see Item 1, under “The Five Optimizations”), but I expand on the concept regularly in my Substack.

Curation > Creation

You’ve probably heard or read the phrase “AI slop” by this point. “Slop” was Merriam-Webster’s 2025 Word of the Year; the dictionary defines it as “digital content of low quality that is produced usually in quantity by means of artificial intelligence.”

Producing things—books, art, videos, etc.—is now easy for everyone. Because essentially all of this slop is produced for digital distribution, for the purposes of gaming some algorithm, there’s a certain monotony to it all.

This gives rise to “content exhaustion” or “content fatigue”: that feeling you get when you realize you’ve been scrolling for 20 minutes and haven’t seen a single thing that made you feel anything at all, positive or negative, just an endless stream of content-shaped objects that occupy space but don’t mean anything, don’t do anything, don’t matter.

In a world where creation is easy, the rare skill becomes curation.

We used to treat curators as secondary characters. Everyone knows Van Gogh; no one remembers the curator who first put him on a wall and insisted that he mattered. We told ourselves that creation was inherently harder than judgment, and maybe that was true when making things required rare training and expensive tools.

AI slop inverts this dynamic. It’s now easy to produce things—too easy. The hard part is going to be sifting through all that slop to find what is meaningful or useful. The good news? That turns data provenance into a bona fide, marketable good, and data curation into a marketable product line.

Is that Revit template actually good? Was it made by an architect who knew what they were actually doing? Is it appropriate for its intended use case? Or is it just slop, generated by an agent that was trained on other slop, all the way down?

Slop will eventually show up everywhere: portfolios, submittals, proposals, renderings, specs. The firms that can curate at scale—that can filter, benchmark, validate, and certify—will be selling risk mitigation as much as design.

Designing for the Future > Waiting for the Future

Or: Why you should be talking to your clients about robots. In 1853, Peter Cooper, who was erecting the Foundation Building at the Cooper Union in New York, required the installation of a vertical shaft in anticipation of a yet-to-be-invented mechanical device for vertical transportation. That device, which we call the elevator, would not appear until four years later, in 1857, at the E.V. Haughwout Building. Cooper merely surmised that it would be invented.

By the time the elevator was invented, the Cooper Union building had a ready-made slot for one. Well, almost. Cooper was wrong on one minor detail: he directed that the shaft be cylindrical, and elevators turned out to be rectangular. Whoops. Regardless, there’s an important lesson here: We can, and should, design for technologies that we know are going to appear and to proliferate.

If you’ve spoken to me at all about anything in the last few years, I’ve probably spoken to you about robots. And I won’t stop beating this drum, because at this point, there is no 30-year future for humanity where robots do not become a ubiquitous part of the built environment.

Humanoid robots get all the hype—and yes, I’m talking about them, too—but the other ones will probably show up first. Your local hospital will debut an autonomous wheeled drone that delivers medication, for instance. Or maybe one that checks on patients at night and asks if they need anything. Or maybe a semi-humanoid diagnostic bot that will come in and perfectly diagnose my condition, and then tell me that my insurance doesn’t cover the treatment (just like a real doctor!).

Delivery robots, cleaning robots, security robots—it’s true, we’ve had these for a while now. But AI provides them an intelligence that actually makes them useful outside of highly specialized uses. To accommodate this robot-filled future, building operators need to plan for robot circulation, charging, and storage. Fire egress and ADA compliance must be coordinated with robot circulation. Mechanical/electrical/plumbing coordination for charging infrastructure must become standard. In other words: we have to broadly change the way we design the built environment.

When I talk to architects about this, I mostly get some version of the same response: “I will start caring about this when my clients do.” OK, that’s fair. But isn’t it your job to see farther and know more about the future uses of the building than your client? Wouldn’t you like future generations to look back on you, as we do on Peter Cooper, and say, “What a visionary!”

So, talk to your clients about their robot future. If they look at you like you’re crazy, show them this video of 60 Minutes’ Bill Whitaker teaching the Atlas Robot to do jumping jacks. Or maybe this one of a robot doing kung fu. (The robot lists for $16,000, BTW.)

A Few Other Things to Keep an Eye On

Jagged Intelligence > Artificial General Intelligence

We’re constantly teased with the imminent arrival of “Artificial General Intelligence” or “Artificial Superintelligence,” either of which is supposed to usher in utopia or the apocalypse, depending on whom you ask and how much coffee they’ve had. Hard definitions of each continue to be elusive, which is a great reason to shift your focus to the “jagged” frontier. Artificial Jagged Intelligence refers to how AI is sometimes scary good, deconstructing problems and issues that had been frying my brain for weeks. And other times it clunks along at an eighth-grade level, hallucinates, and tries to argue that Brutalism was beautiful. An AI agent can be great at task X and terrible at task Y, but if completing task X is what pays the bills, you won’t care.

Smart firms will navigate the jagged frontier by leveraging the precipitously dropping cost of training small AI models (reportedly by 200x–280x), and their architects will spend a lot more time curating data and benchmarking accuracy, not just prompting.
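Benchmarking a jagged tool can start small. The Python sketch below, using invented task names and an invented review log, tallies accuracy per task type so a firm can see where an agent is reliable and where it is not. The 0.9 cutoff is an arbitrary assumption, not a recommended standard.

```python
from collections import defaultdict

# Hypothetical review log: (task_type, agent_was_correct). All data is invented.
results = (
    [("spec_review", True)] * 19 + [("spec_review", False)] * 1
    + [("code_lookup", True)] * 5 + [("code_lookup", False)] * 15
)

def accuracy_by_task(log):
    """Return {task_type: fraction of items the agent got right}."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for task, ok in log:
        totals[task] += 1
        correct[task] += int(ok)
    return {task: correct[task] / totals[task] for task in totals}

THRESHOLD = 0.9  # arbitrary cutoff for handing work over without a second look

scores = accuracy_by_task(results)
for task, score in scores.items():
    verdict = "delegate" if score >= THRESHOLD else "keep a human in the loop"
    print(f"{task}: {score:.2f} -> {verdict}")
```

In this made-up log, the agent earns its keep on spec review and needs supervision on code lookups. A real tally would need a human-verified answer for every logged item.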

Painful Tech Adoption > Painful Delays

AI/robotics adoption will accelerate in the construction industry as a result of a push-pull dynamic. On the push side, labor shortages caused by the Trump administration’s immigration policies will drive contractors to find labor substitutes (e.g., automation and robots). On the pull side, insurance companies, seeking to moderate risk caused by labor shortages and climate disruptions, will reward tech-driven risk management (think usage-based insurance on all equipment, not just vehicles, or RFID-enabled inventory management to hedge against theft or shortages).

Alliances > Lawsuits

We’ll stop being paranoid about AI companies “stealing” our data and training on it, and instead get used to the idea of leasing it. Giant AI companies (OpenAI, Meta, Anthropic) and giant IP holders (Disney, Universal Music Group) are already setting the pace. In several landmark cases, they’ve stopped suing each other and just brokered “you let us train on your data and we’ll give you huge piles of money” deals. Increasingly, we’ll have two choices: try to form a magic wall around our data and defend it from armies of AI training bots, or get our data in good enough shape that someone would want to pay to train on it.

For a full breakdown of Cesal’s 2026 predictions as well as ongoing insights into the confluence of design, technology, disaster and resilience, visit his Substack and subscribe for free. Featured image, “I, For One, Welcome Our New Subconsultants,” was created by the author using Midjourney.