If Everybody’s Using AI, What’s An AI Design Studio For?

Nothing in my 25 years of teaching architecture prepared me for what I experienced in my AI studio this semester. The technology has advanced so rapidly since my last AI studio that, as a tool and a process, it is almost unrecognizable. AI systems today raise questions that legacy drafting and modeling software never raised about authorship, about what design intent means, and about whose aesthetic histories are encoded in training data, which forces us to reconsider the role of the student, the professor, design tools, and even authority. A dedicated AI studio is the appropriate venue to take these questions seriously. My students and I discussed these matters throughout the semester, and those discussions revealed how much has permanently changed.

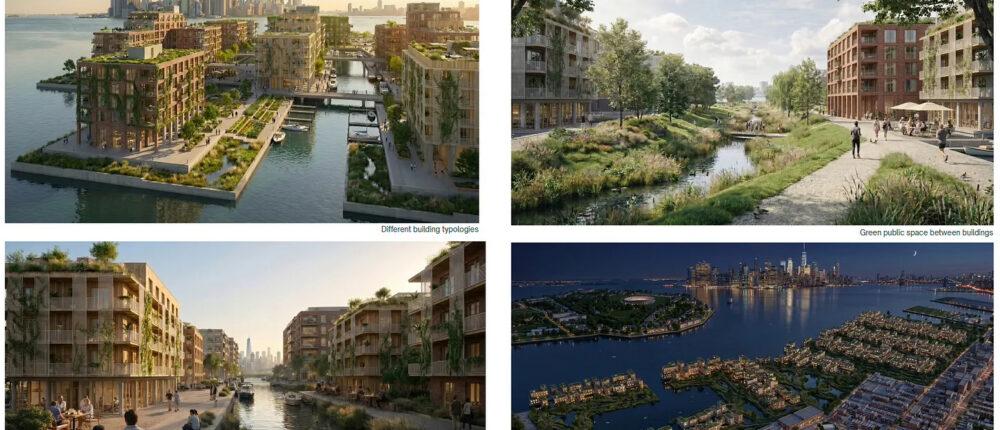

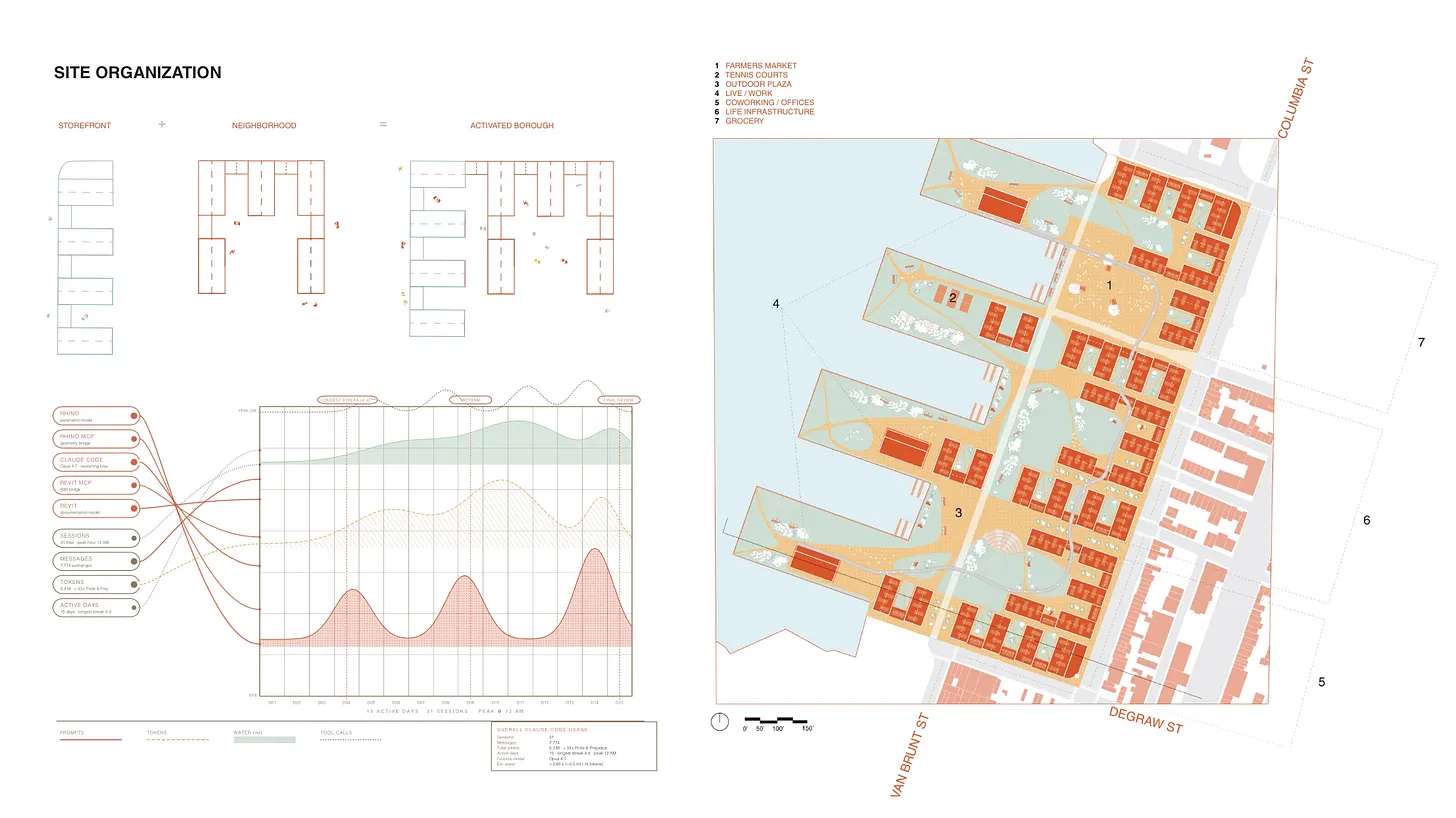

Aerial Site View, Piering Gable, Red Hook, Brooklyn, Team 1: Macy Sharp & Mohna Sharma.


The semester-long assignment was complex, by design. I asked graduate students to leverage AI in the redesign of the 122-acre Brooklyn Marine Terminal in Red Hook, New York City, and to help solve the affordable housing crisis while doing so, which is no easy task. The first half of the semester focused on a vision and master plan (schematic design), and the second half focused on buildings (design development).

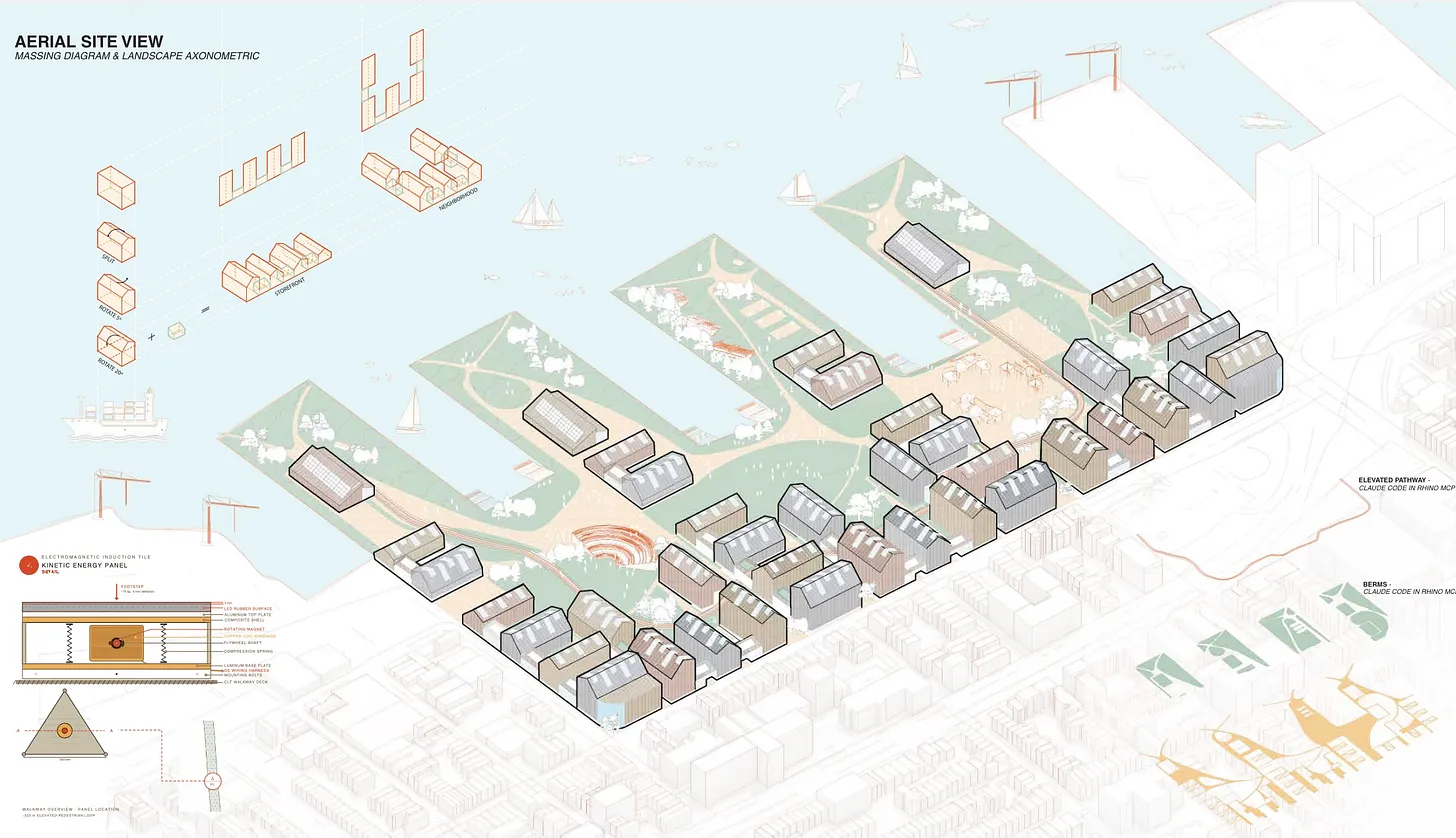

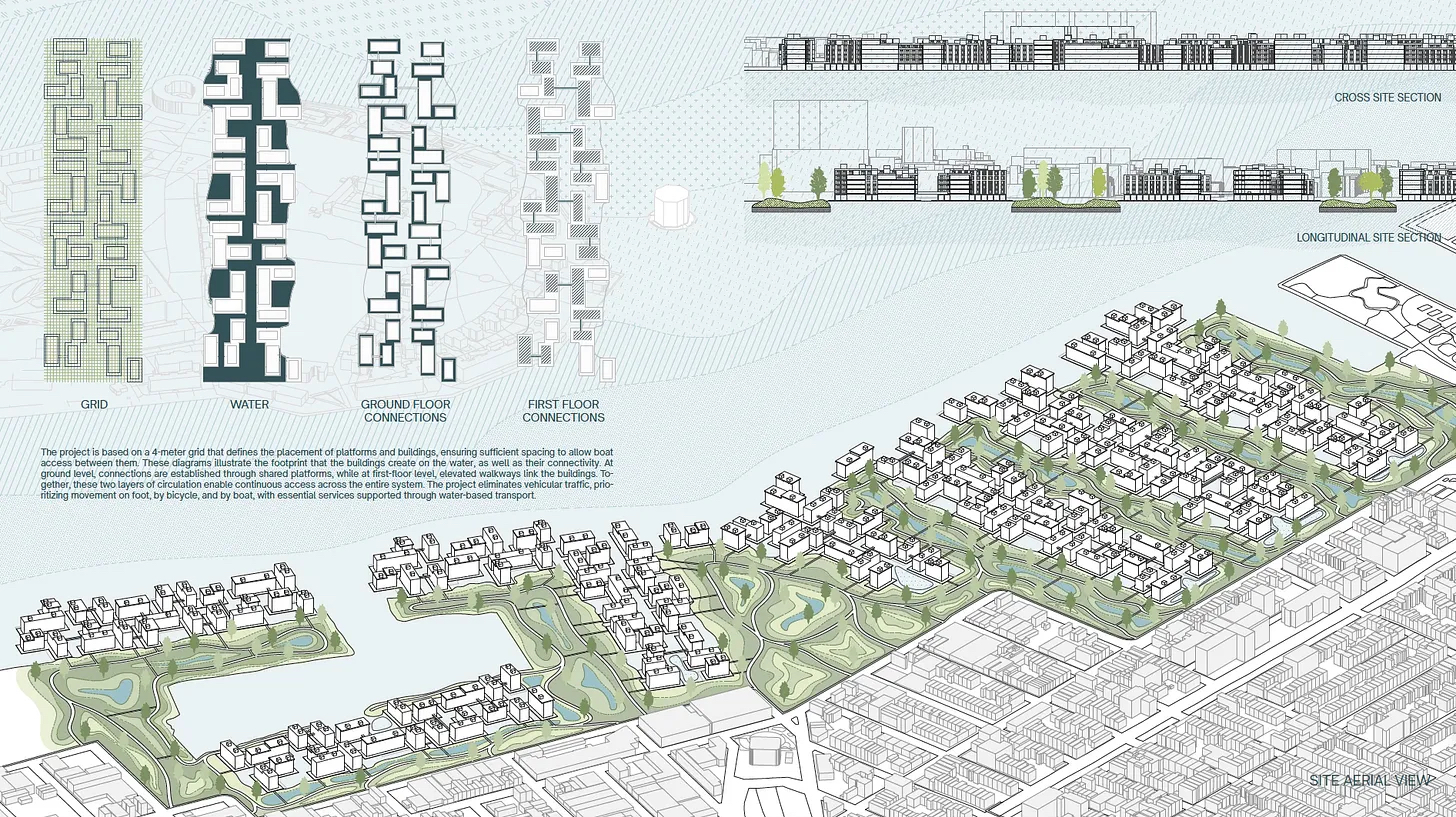

Isometric View, Piering Gable, Red Hook, Brooklyn, Team 1: Macy Sharp & Mohna Sharma. The diagram on the far right indicates liters of water used by the team during the semester’s AI use.


Bright Spots

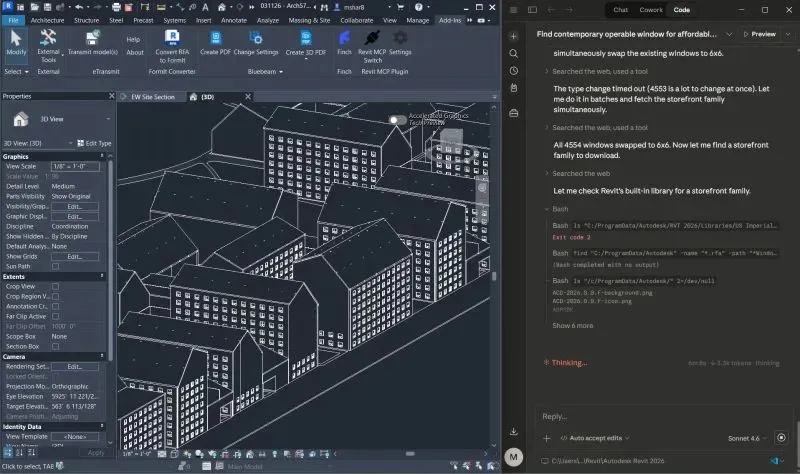

First, the tools. Though our aim was to be tool-agnostic throughout, the reality was quite different. Of the 22 AI tools we tested, analyzed, and explored (including working directly with the Finch3D folks in Sweden), what worked best for most students was near-continuous use of Google Flow, Claude Code (in Revit, Rhino, and Grasshopper), and Claude Cowork. None of these tools existed as a consumer product prior to the semester’s start.

I’ll recap here what our students did with Claude Cowork. For some of them, it is always on in the background, so they can ask it questions or hand it tasks all day long.

Using Model Context Protocol (MCP) connections to Rhino and Revit, students leveraged Claude Cowork for drawing production, landscape modeling, creating walkways and pathways, and placing exterior wall assemblies, floor assemblies, and windows.

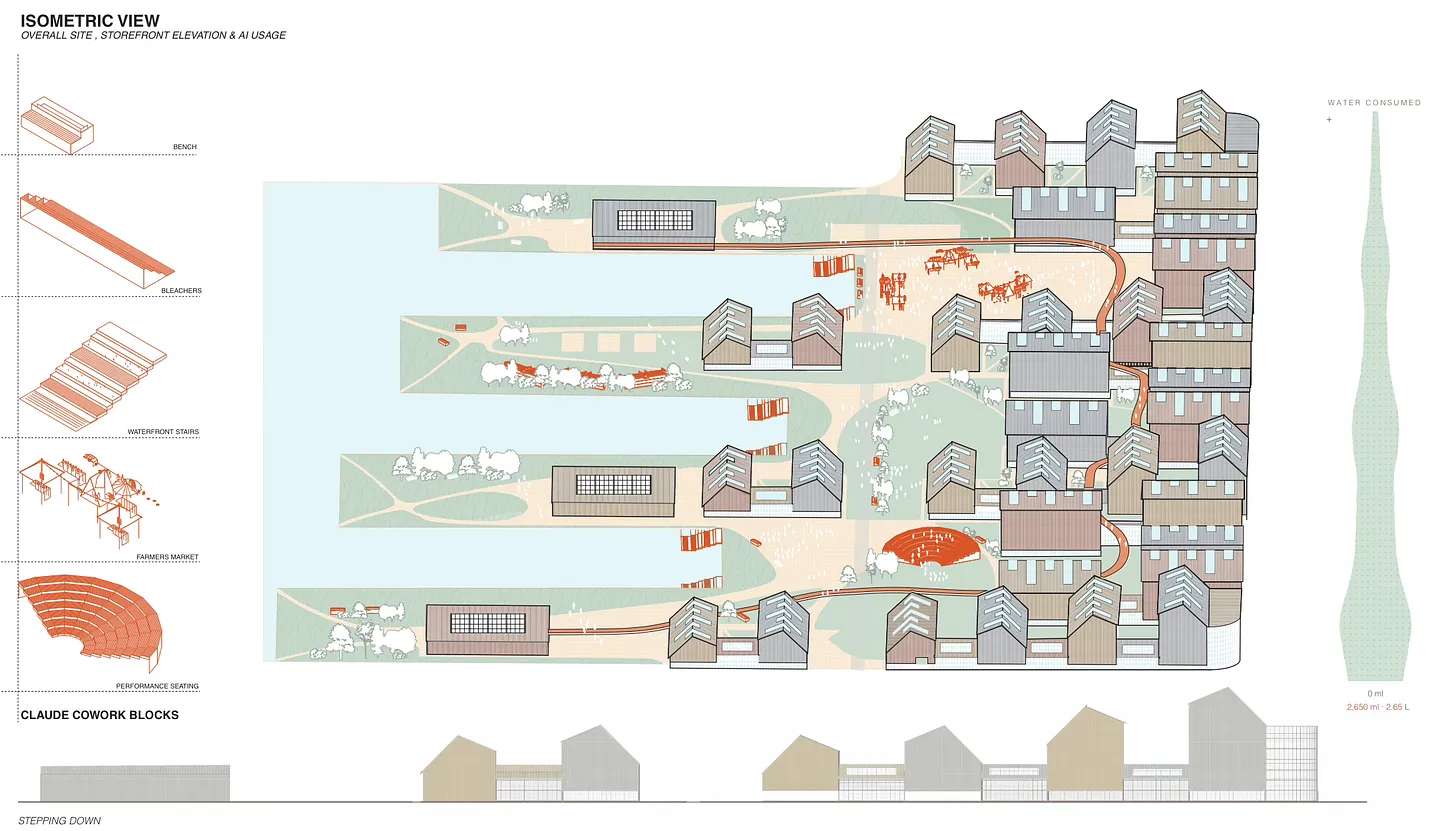

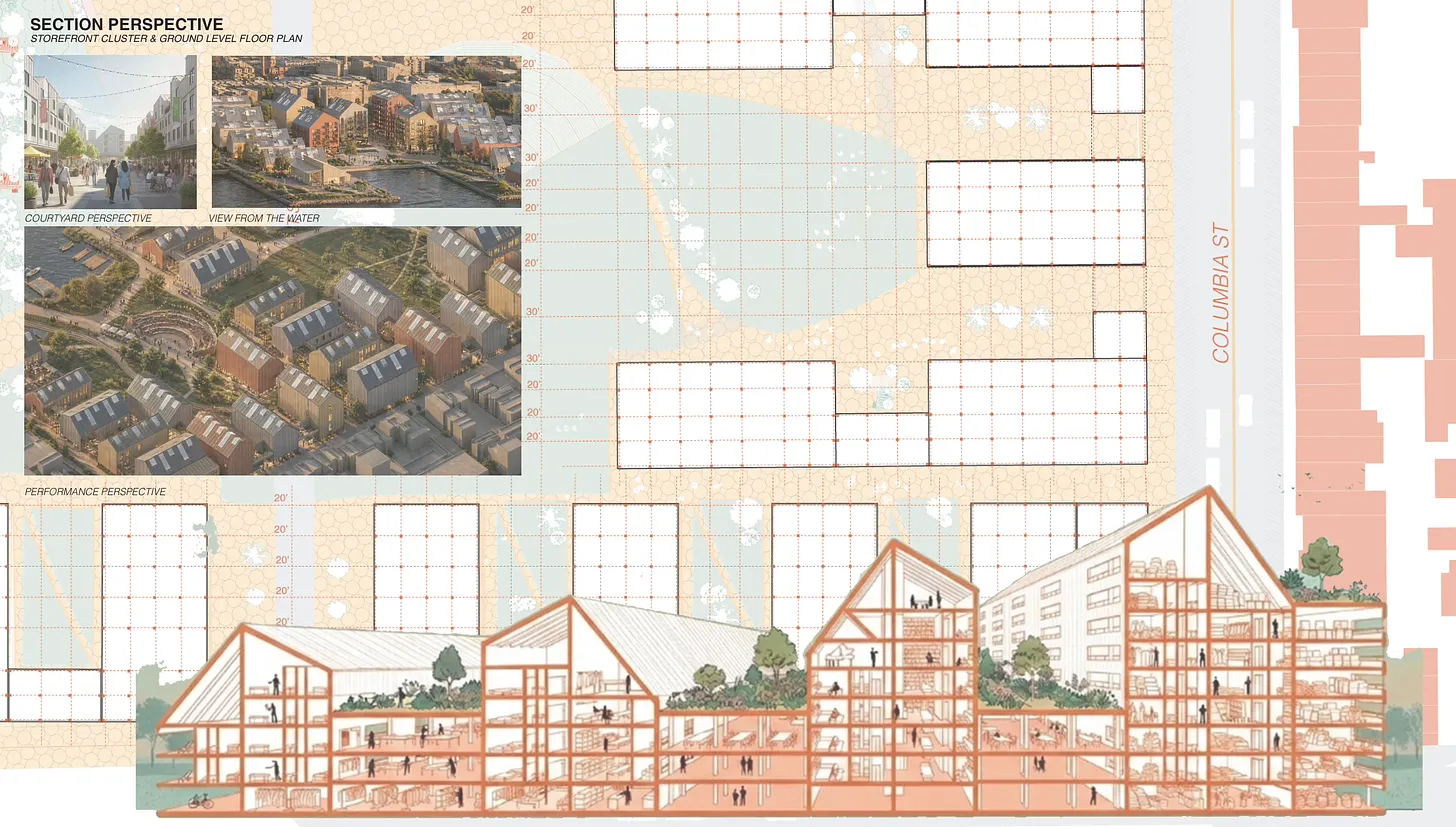

Sectional Perspective, Piering Gable, Red Hook, Brooklyn, Team 1: Macy Sharp & Mohna Sharma.


In Claude Cowork, one team created a project file containing their color palette selection and data on their personal Claude Code usage, then prompted Claude Cowork to create a diagram showcasing this for their final review presentation. Claude Cowork produced the diagram as well as an editable Illustrator file.

Claude Code will autonomously recruit one or more agents to help complete tasks. As one of our students, Macy Sharp, posted about her use of Claude Code in the studio: “AI hired an agent, pathways went rogue, and I built a website. Just another week!”

Another team used a Rhino MCP connection to link Rhino to Claude, which generated building mass and void iterations. The team then plugged the output into Grasshopper, generating iterations based on parameters such as wind, daylight, and views, and finally gave Claude the .epw weather file for New York City so that it could use the Ladybug library to redo the massing iterations. As Binathi Karupu Reddy posted online, “The results amazed us! It created terraces between floors, pulled apart units to create dramatic cantilevers and saved each iteration as its own Grasshopper file, ready to bake into Rhino.”

Site Organization, Piering Gable, Red Hook, Brooklyn, Team 1: Macy Sharp & Mohna Sharma.


The time savings for students who used Claude Code were extraordinary: they could accurately complete a two-week assignment in 5–10 minutes. The time saved was then applied to overall project scope, coordination, and quality.

Generative, Probabilistic, and Increasingly Agentic

AI systems today raise questions that drafting and modeling software never raised, questions about:

- authorship;

- what design intent means;

- whose aesthetic histories are encoded in training data; and

- the potential displacement of labor.

We discussed all these things throughout the semester. A dedicated AI studio is the appropriate venue to take these questions seriously.

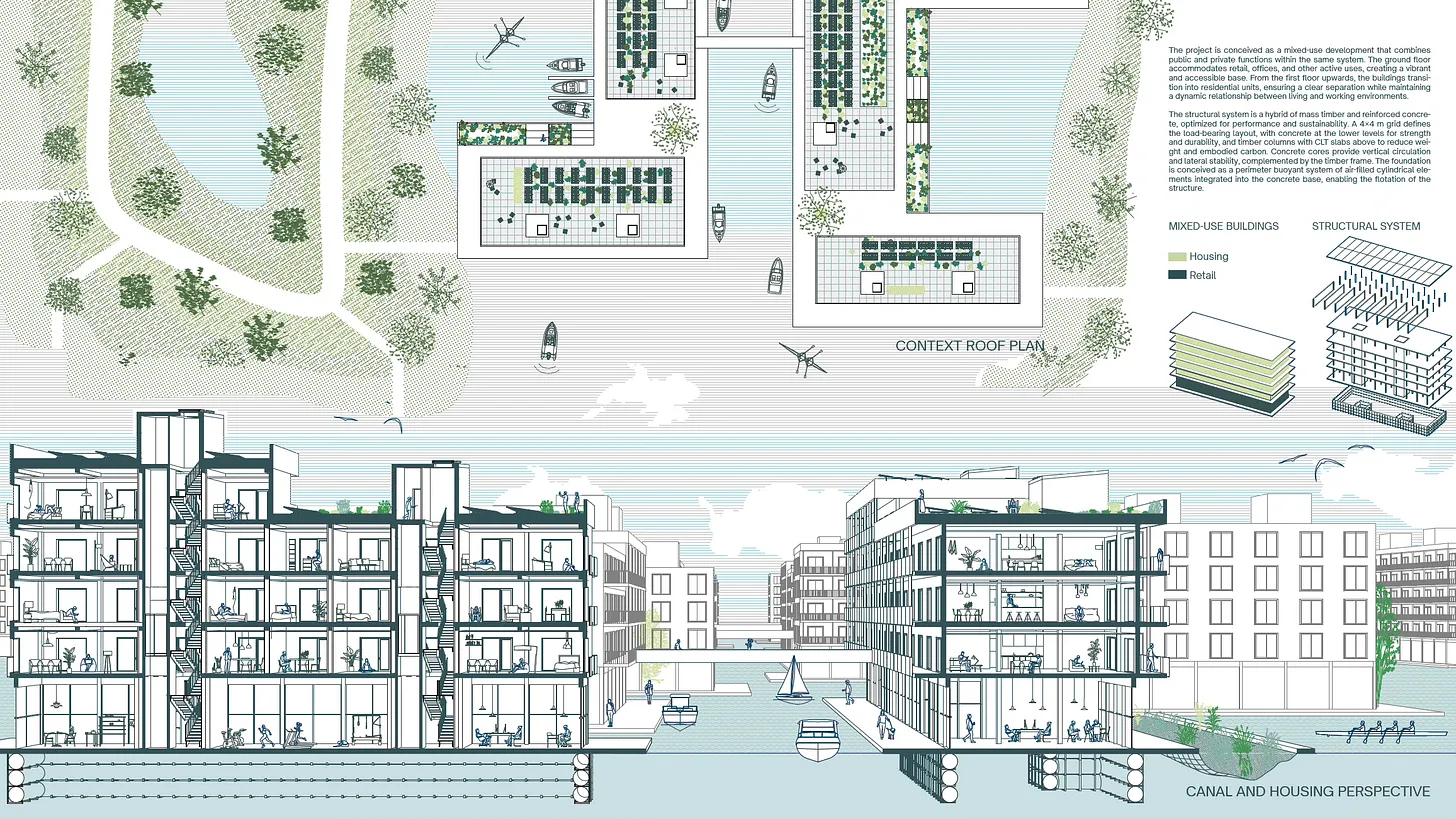

Between Edge & Water, Red Hook, Brooklyn, Team 2: Bruno Bescos, Abril Hernandez, Carla Saiz.


Ethics and Critical Practice

Using AI as the basis for a design studio raises as many questions as it answers. Among them are questions of pedagogy: whether students are learning when using AI, and what exactly they are learning. All in all, however, teams leveraged Claude Cowork to streamline their drawing production while staying informed about resource consumption throughout the process.

Questions About Equity and Access

In terms of access, subscription costs for premium tools can create disparities. Students notice who has access to what. As the instructor, I split AI tool costs with students and could be seen at times handing out fistfuls of $20 bills in the studio. While admittedly weird, there is nothing unethical, unseemly, or illegal about professors providing cash payments to students (I inquired), and I have been doing this for years.

AI takes a toll not only on pocketbooks, but also on natural resources. In response to this, one of our students posted, “Claude Code can take many tokens with the MCP connection to Revit & Rhino, and we have the desire to monitor our usage to recognize the physical resources spent on the project.”

Questions Related to Skill and Workflow

Throughout the semester, we gathered in a circle and repeatedly discussed three things: how to integrate generative tools with parametric modeling, BIM, and drawing workflows while students are still learning them; when to use AI for ideation vs. representation vs. analysis; and how to document and present an AI-assisted process in reviews and portfolios without it looking like cheating or laziness, or focusing on the tools over the outcomes.

Site Section, Between Edge & Water, Red Hook, Brooklyn, Team 2: Bruno Bescos, Abril Hernandez, Carla Saiz.


Questions Concerning Assessment

Students naturally want to know how their work will be graded, so anyone leading an AI studio must provide a clear position on what they’re evaluating. You will want to see evidence that students can prompt effectively, curate, edit, and direct outputs rather than just accept them, and that they understand the model’s limitations. Skeptics, including those who sit in on students’ reviews, worry that AI-native graduates may lack the slow observational and material intelligence that good architecture requires. So they will want to see evidence of this as well.

Building Section, Between Edge & Water, Red Hook, Brooklyn, Team 2: Bruno Bescos, Abril Hernandez, Carla Saiz.


The most critical unspoken reviewer question, especially if they are from practice, is: Does this graduate show evidence of judgment or just fluency?

From my experience leading AI studios, I’d recommend evaluating and assessing for judgment, process, critical reflection, and then outcome, because traditional design evaluation frameworks currently on display in our syllabi no longer make sense in this environment.

Questions Concerning Accreditation

NAAB and other global accrediting bodies recognize that student performance criteria were not written with generative AI—let alone agentic AI—in mind, so expect debate on whether AI-assisted work demonstrates student competency or tool competency. This is unresolved for now and can create friction with those who may be unfamiliar with or critical of these tools.

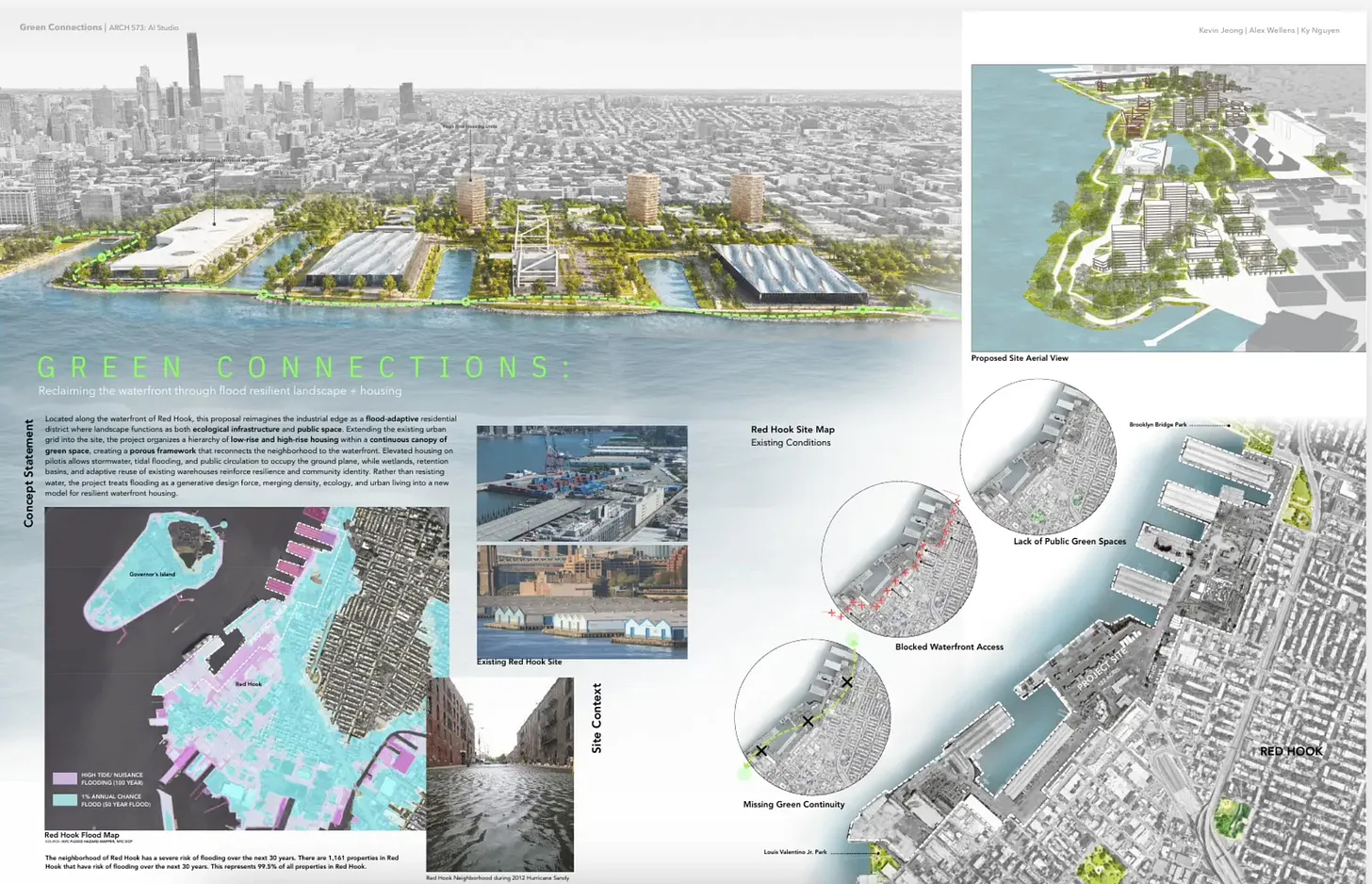

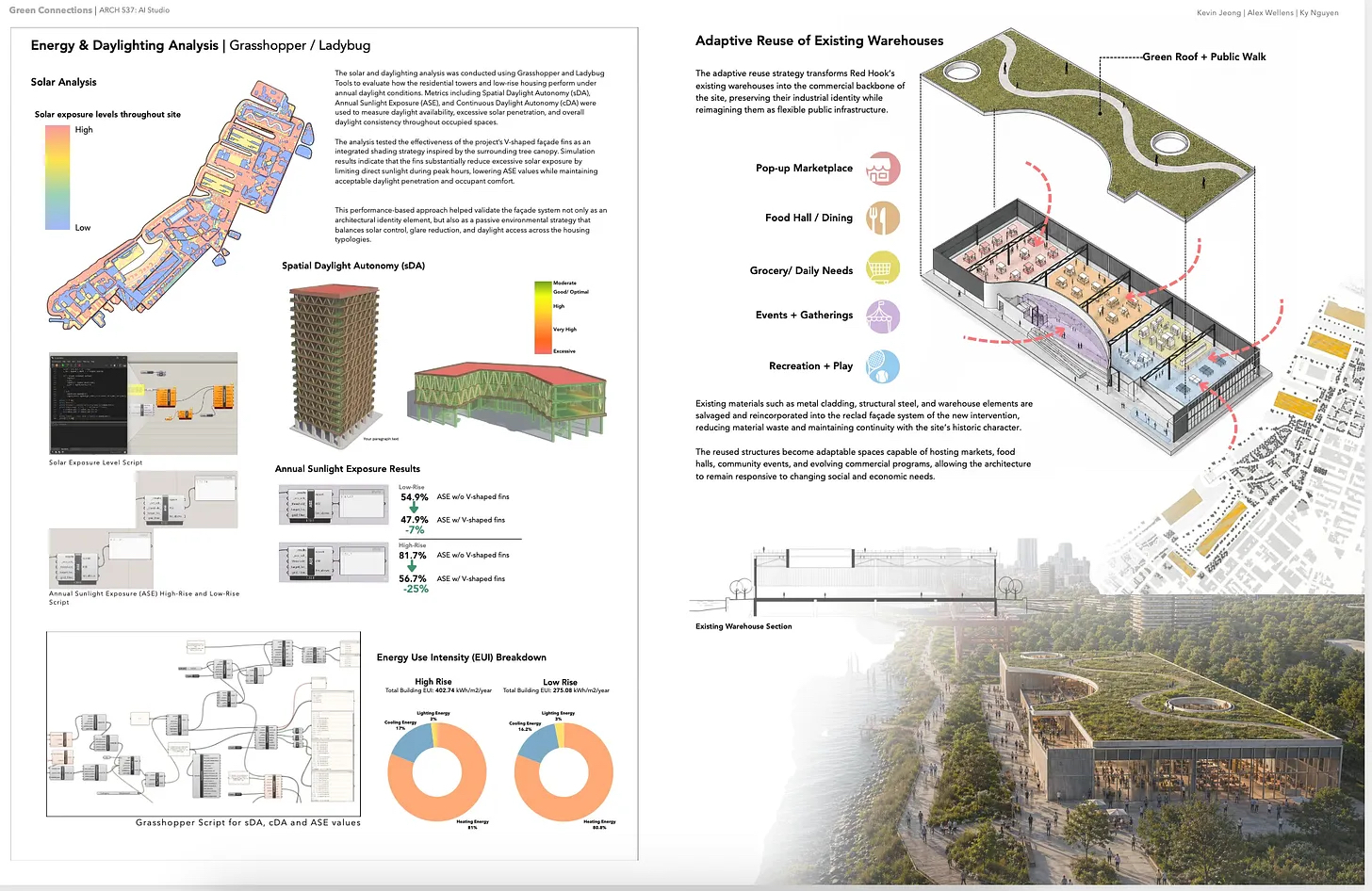

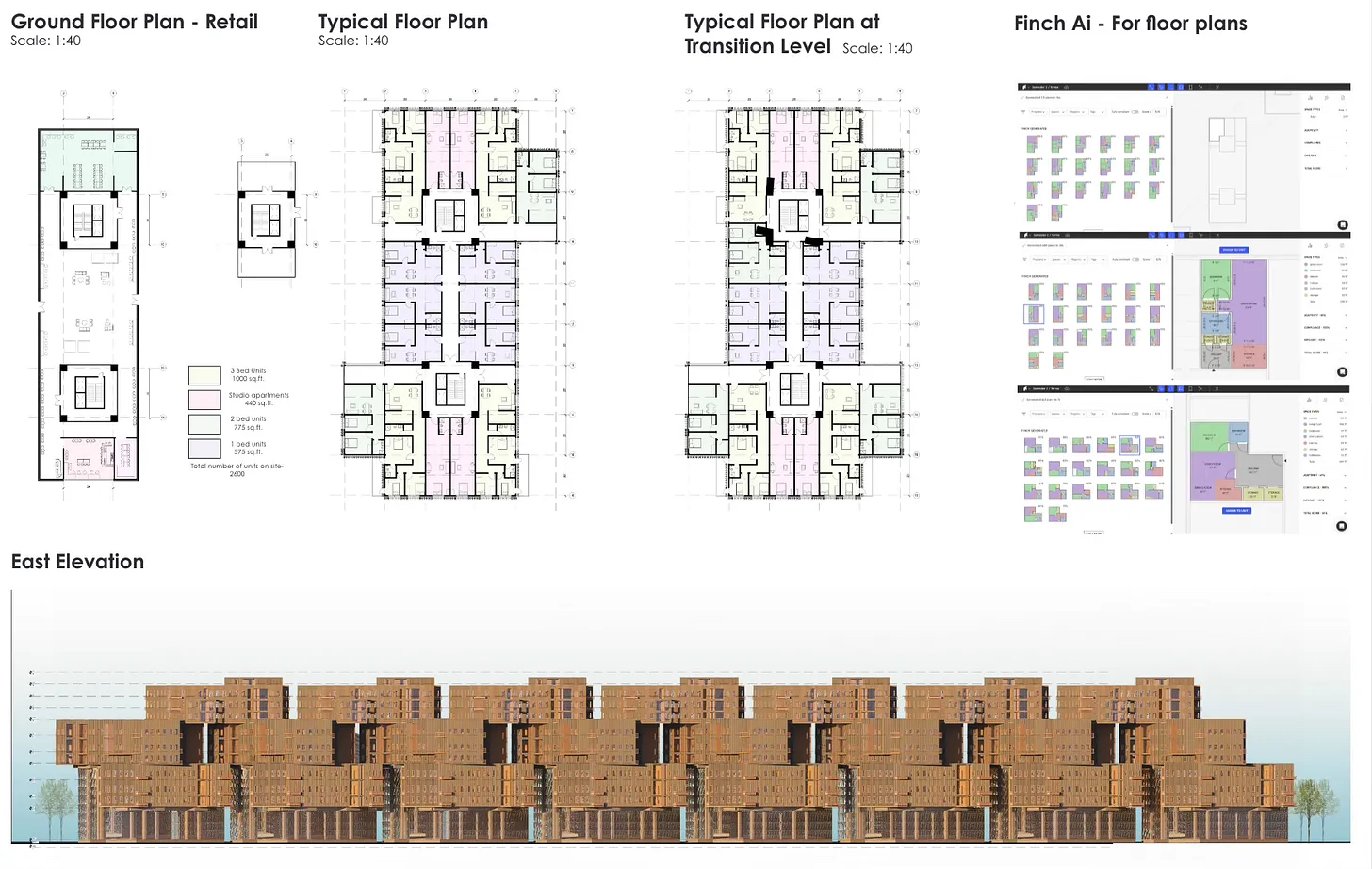

Green Connections, Red Hook, Brooklyn, Team 3: Kevin Jeong, Alex Wellens, K. Nguyen.


Who Teaches When Students Make Discoveries on Their Own With AI?

Given where we are with AI, what I’m asking here is: Are architecture professors still needed? If so, what for? I am not just being provocative. I am asking: What exactly does a professor teach in an AI studio?

What I discovered this semester is that some students, so enamored with their new AI instructor, stopped listening during desk crits. For some professors, this won’t be new behavior. However, this is not just another instance of students distracted by screens, or checked out with graduation nigh, but a sign that something has permanently changed in the relationship between student, tool, and authority.

Green Connections, Red Hook, Brooklyn, Team 3: Kevin Jeong, Alex Wellens, K. Nguyen.


Enamored with AI, students at times merely tolerated my feedback during desk crits, and impatiently at that, as indicated by their body language, lack of eye contact, or an engine sound emanating through their teeth, as though they were about to run a red light: Old man in the crosswalk, get out of our way!

Only, I am the old man in the crosswalk.

When students used legacy tools, the professor still offered something the tool couldn’t provide: judgment about whether the thing was any good, and why. The tool was once mute on that question. No longer. AI produces fluent, confident, aesthetically coherent results; more importantly, unlike prior tools, it is capable of explaining itself.

AI is now on the verge of offering judgment. AI never sighs, never makes students feel like their idea is naive, never has a bad day. Professors, however, do.

What’s new in 2026 is that AI doesn’t just execute; it authors, explains, and responds to critique on its own. Our students not only re-prompted but also uploaded redlined responses, which the AI addressed speedily, more exactingly, and without complaint.

Arguably, all tools make students feel powerful. AI makes students feel accompanied. As a result, the psychological dynamic between student and professor has permanently changed. What I have observed this semester is a substitution for the student-professor feedback relationship. When I sit down for a desk crit, students are already inside a critique loop that feels complete to them.

We, the professors, are out of the loop.

Green Connections, Red Hook, Brooklyn, Team 3: Kevin Jeong, Alex Wellens, K. Nguyen.


Is the Professor’s Authority Diminished or Just Repositioned?

Both. The informational authority of the professor—knowing more precedents, knowing how buildings are detailed, knowing what’s been tried before—is now permanently diminished. Game over. AI knows more architectural history and theory than any professor. It can generate more precedent images in seconds than a professor can recall in a career. Pretending otherwise is delaying the inevitable.

Green Connections, Red Hook, Brooklyn, Team 3: Kevin Jeong, Alex Wellens, K. Nguyen.


The professor as the primary source of precedents and technical information is probably not a role worth defending. Competing with AI on those terms is a losing position. Similarly, the professor is no longer the person who tells students how to use the tools. Students now surpass their professors technically (and, when they graduate, their hiring managers), and that’s fine.

What AI Cannot Do

Cool your heels; breathe deeply. I am happy to report that there are things AI cannot do, and for an architectural education, these have enhanced importance.

Good lecturing is not primarily about information transfer. Likewise, a desk crit is not primarily an information transfer. Think of it instead as an experienced practitioner watching a specific student think, identifying where their thinking gets stuck or goes awry, and then intervening at just the right moment in the right register.

AI cannot (yet) watch you; it only receives what you give it. The perceptive professor sees what the student is not showing: their hesitation, the drawing that got buried, the insecurity of imposter syndrome, the communication cycle not completed, the idea abandoned too quickly.

Studio is a surveillance state: you are being watched. This is good, as this means you, the student, are seen, not invisible. In an AI studio, the desk crit is now a reading of the student, not a reading of the project. This may be hard to accept, but the project is now partly AI’s work.

Tidal Terraces at Erie Basin, Red Hook, Brooklyn, Team 4: Tara Anand, Binathi Karupu Reddy.


Professors stake their professional judgment and reputation on their assessments. When a professor critiques, their judgment (experience + intuition + knowledge) comes from a person who will be held accountable for it. The transmission of culture that takes place is personal. It happens in conversation, in the silences of a desk crit, in what a professor chooses to draw when they take out a marker and trace at the student’s desk.

AI responds to prompts; it is reactive. The professor’s most important interventions are often unprompted, introducing a response the student didn’t know they needed, connecting the project to something altogether outside architecture, or identifying a problem the student was unable to see.

While no one wants to suffer—and even less so, be the one causing suffering—architectural education has always involved productive frustration, the moment when the easy answer is rejected and the student is asked to go deeper. The professor is the person who makes students uncomfortable in productive ways. AI, always the empathetic pleaser, is constitutionally incapable of such refusal. It will always give the student something. The professor, however, can say: Go further.

Finally, the studio is a community where students learn to evaluate each other’s work, defend positions, and argue about quality. AI cannot (yet) participate in that community.

Tidal Terraces at Erie Basin, Red Hook, Brooklyn, Team 4: Tara Anand, Binathi Karupu Reddy.


Moving Forward: Does It Make Sense To Teach AI in an Architecture Program?

The argument for letting graduates learn AI later, on the job, underestimates how much the studio needs to be a critical environment. Practice teaches tools as instruments: get the output, meet the deadline. AI in architecture raises questions that firms have neither the time nor the inclination nor the incentive to interrogate. If school doesn’t do it, nobody will. School is where students can slow down, ask why, push back, and develop judgment.

Agentic AI in Revit, Piering Gable, Red Hook, Brooklyn, Team 1: Macy Sharp & Mohna Sharma.


When using AI, it pays for students to slow down, stop, and listen. This is what critical practice looks like. In contrast to the way many students use AI in their studio project work, thoughtful and rigorous AI studios are slop-free zones.

Of this I am certain: Evidence of our AI studio as a critical practice was ample throughout the semester. Does using AI in studio make sense? Yes, emphatically. But how it is used matters.

If AI Generated This, Is It Still My Design?

Perhaps the most important question of all is this: How do you run a rigorous studio when outputs are not deterministic, especially when the provenance of design outputs is unknown and can never be entirely determined? Our students believed that the outputs represented their design because they were based on the students’ own prompts (and successive, iterative re-prompting). And because the outputs were editable, students used them as starting points, never as presentable results.

Where do my students stand on this? This much I know: They’ll be asked to justify their work as architecture, not just technology, so moving forward, they’ll need a clear answer about what architectural thinking, specifically, an AI design practice develops.

How Do I Know When To Trust the AI Output?

According to my students, they trust AI outputs because they only use tools that they have scrutinized, stopping to question at every step whether what they are using is representative of their design intent and vision for the project. They look for signs that cue them as to whether the output is reliable, always critically questioning. They ask themselves: What signs can I rely on? And how do I know?

Am I Learning Less by Using This?

I asked our students: This semester, do you feel like you learned less than, more than, or the same as in previous studios? Their emphatic answer was that they learned more.

This is also true: In 25 years of teaching studio, I have never seen students more engaged, dedicated, and energized—even excited—about the work they were doing. When work is fun, learning will follow. This has to be considered, too.

Conclusion

Students learning AI need to understand what it optimizes for, what criteria it uses and why, what it flattens, and what it cannot see. They then need to develop the critical distance to work with it rather than be worked by it.

Professors need to recognize which parts of their authority have been displaced, and not spend time and energy defending those parts. Instead, deepen what only you can offer. A growth (as opposed to a fixed) mindset is crucial.

My students, not looking up from their monitors, tell me that the desk crit needs to find its new place, one that AI cannot occupy.

One of our students, Tara Anand, said: “During this semester we have been questioning our role as architects and whether AI disrupts how we design. As a team, we think it does, but in the best way possible! Holding onto creative autonomy and vision is a key requisite to working with AI, using it as a tool to make our workflows more efficient, instead of allowing it to ‘take over’ the design. It does not replace architects and designers but rather shifts our role to that of system thinkers. The goal is no longer just the design itself, but also the process that generates it.”

Our future is in their hands. For future AI studios, the question to ask is: Are you teaching tool use or critical practice? A studio that focuses on the former is less valuable than on-the-job training. One that does the latter is irreplaceable.

A somewhat different version of this piece originally appeared in the author’s Substack, which can be found here. Featured image generated by Flow AI.