The Liability Question: When AI Designs Buildings, Who’s Responsible?

Artificial intelligence is beginning to shape decisions about the physical environment in ways most people never see directly. Algorithms now assist in evaluating proposals, modeling infrastructure systems, and testing design options for buildings and neighborhoods. As these tools become more influential in shaping the places where we live and work, a practical question emerges: When a design decision is influenced by an algorithm, who is responsible if the result proves to be flawed or harmful?

In architecture, the answer has traditionally been clear. Buildings are designed within a professional framework that assigns responsibility to licensed individuals who must exercise judgment on behalf of the public. This structure exists because buildings shape daily life, from the safety of the spaces people occupy to the character of the streets and neighborhoods they inhabit. When a building is approved for construction, the drawings carry the seal of a licensed architect who is legally responsible for the design. Contracts, permits, and professional liability insurance rely on the assumption that someone can be held accountable for the decisions embedded in a building. The growing presence of AI in design workflows does not remove that structure of responsibility. In many respects, it makes it more visible.

Generative systems can test site configurations, simulate environmental performance, analyze zoning capacity, and model construction costs with a speed that was previously difficult to achieve. These capabilities expand the analytical reach of design teams and allow conflicts to be identified earlier in the process. Because of this, AI is often described as a tool that will reduce risk in the design and construction of buildings. However, this framing misunderstands how risk actually operates in architecture.

Buildings are long-term commitments that must perform across decades of weather, maintenance, ownership changes, and evolving patterns of use. Structural systems must tolerate uncertainty, building envelopes must endure environmental exposure, and site planning decisions shape circulation and infrastructure long after construction is complete. For that reason, architecture has never been organized around the elimination of risk. Instead, the profession developed institutional structures that identify who is responsible for managing risk.

Licensing, contractual agreements, and professional liability insurance all reflect this framework of accountability. When a building is designed and constructed, responsibility for the decisions embedded in it must remain traceable to identifiable professionals expected to exercise informed judgment. AI operates within this framework and makes professional interpretation even more important.

Algorithmic results often appear neutral because they are expressed through numbers and simulations. Yet every computational model reflects assumptions about what should be measured and what should be prioritized. A system designed to maximize rentable area will reliably produce denser buildings. A model focused primarily on traffic efficiency might prioritize vehicle movement, even if it makes a street less comfortable for pedestrians. These outcomes are not errors; they simply reflect the priorities embedded within the model.

The professional question, therefore, is who defines those priorities and who decides how the results are interpreted. AI can generate options and evaluate measurable criteria, but it cannot determine whether those criteria reflect the full range of consequences a building will produce. Someone must still decide whether the objectives guiding the analysis are appropriate and whether the results should be accepted.

This distinction becomes especially important when questions of liability arise. In legal disputes, architects are evaluated according to what is known as the standard of professional care. This legal standard determines whether a professional acted responsibly in making decisions that affect public safety and the long-term performance of buildings. It does not require perfection, but it does require that professionals act reasonably and make informed decisions based on the information available at the time. When a problem occurs, the central question is rarely whether a design could theoretically have been optimized further. The question is whether the professionals involved exercised responsible judgment in reaching their decisions.

AI does not alter this legal framework. Contracts, building permits, and professional insurance policies continue to identify licensed architects as the parties responsible for the design of buildings. When decisions are questioned, it’s the architect who must explain the reasoning behind those decisions and demonstrate that they were reached through appropriate evaluation. A design decision cannot ultimately be justified by saying that a model recommended it. Responsibility remains with the professional who accepted the output and incorporated it into the project.

The growing analytical power of AI makes the distinction between optimization and judgment even more important. Computational models can show that one configuration produces higher density while another improves daylight access, or that one structural approach reduces initial cost while another improves durability. What they cannot determine is which of those tradeoffs is appropriate within a particular social, environmental, or civic context. Deciding what should be prioritized requires interpretation that extends beyond the outputs of a simulation.

Another complication is explainability. Some AI systems produce results through processes that are not fully transparent to the people using them. Architectural decisions must often be explained to regulators, clients, consultants, contractors, and, sometimes, courts. When algorithmic analysis influences those decisions, professionals must still be able to account for how conclusions were reached.

AI therefore does not remove risk from the design process so much as relocate it. Analytical tasks that once required substantial manual effort can now be performed quickly. Design teams can evaluate more alternatives and explore more variables earlier in a project’s development. At the same time, the consequences of misinterpreting computational outputs become more significant because those outputs influence decisions at greater speed and scale. Risk increasingly concentrates at the moment when professionals interpret results, question assumptions, and decide which conclusions are reliable.

Architecture has encountered similar technological transitions before. Each new tool expands analytical capability while increasing the importance of professional oversight. AI follows the same pattern. Greater computational power does not eliminate responsibility. Instead, it intensifies the need for informed judgment about how results are interpreted and applied.

The growing use of AI also raises a related question that extends beyond liability. As computational tools make certain forms of analysis faster and cheaper, market expectations about the speed and cost of design services may begin to shift as well. When analytical capabilities become widely available through software, professional expertise can be mistaken for something interchangeable with the tools themselves. The legal structure of responsibility, however, remains unchanged. Even as technology alters how design work is performed, the obligation to interpret results and accept accountability for the consequences still rests with identifiable professionals.

Maintaining clarity about where responsibility resides is therefore essential not only for professionals but also for the public. Buildings are not simply technical objects; they are civic artifacts that shape safety, accessibility, environmental performance, and the character of shared spaces over decades. Society licenses architects not because they control drawing tools, but because someone must accept responsibility for evaluating competing priorities and making decisions that carry lasting consequences.

AI expands the analytical tools available to that decision-making process. It can reveal patterns, test scenarios, and identify conflicts that might otherwise remain hidden. What it cannot determine is which outcomes are appropriate or which risks are acceptable within a given context. Those judgments remain the responsibility of the professionals entrusted with shaping the built environment. As computational tools become more capable and persuasive, the need for visible and accountable professional judgment does not diminish. It becomes more important.

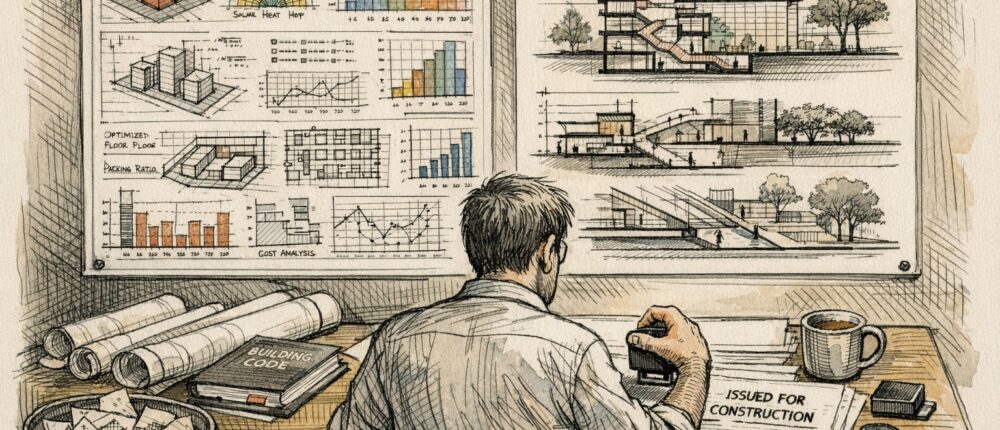

Featured image created by the author, using DALL·E prompts and refined through manual editing.